Note to Readers: Four years later and there are now suspicions that metal-oxide memristors may have shelf-life issues. Read all about it here.

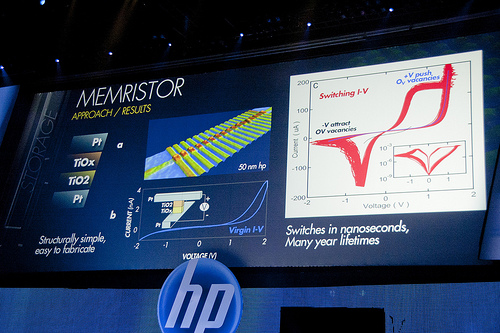

HP’s Memristor Problem

Today I read something that did not surprise me. HP has said that their memristor technology will be replaced by traditional DRAM memory for use in “The Machine”. This is not surprising for those of us who have been in the field since before HP’s memristor marketing engine first revved up in 2008. While I have to admit the miscommunication between HP’s research and business development departments is starting to get really old, I do understand the problem, or at least part of it.

Image by Luke Kilpatrick

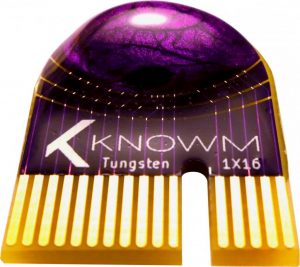

When an organization gets as large as HP, it becomes fragmented. The people who make the decisions at the top do not understand the technology being developed or the technical problems being faced at the bottom. Market and company politics create top-down objectives that the people at the bottom try desperately to fulfill. This environment works only for established technology and solutions, because those are the only things everyone in the organization understands. When it comes to the memristor we have a problem. You can’t force a technology to be something other than what it is; you have to embrace the reality. When an organization gets large and fractured, this reality can get lost in translation as it is passed up the ladder. HP is clearly having issues with their device (for example, see this report from Sandia National Labs and Dr. Campbell’s take on oxide-based devices), but that is not the end of the story for memristors. Since HP’s announcements seven years ago, a number of other material stacks have been developed and demonstrated by Knowm Inc. and others, and we are now just entering the market with discrete devices for neuromorphic computing research and CMOS BEOL Memristor services. To be perfectly honest, HP’s announcement is more than a little irritating, and I’m not the only one who feels that way.

If this were 2010, I’d say they must be doing this on purpose to keep computer progression held back, but the problem is HP no longer has a monopoly on memristors. Crossbar is releasing memristors in their own products this year, and that’s not including various other memristor tech such as Nantero’s new technology. Now HP is just screwing themselves. —Yuli-Ban via Reddit.

Memristors are much more than switches. They are a gateway into a whole new way to compute and have a number of applications. Memristors are not one device either: there are many devices, each with its own unique properties suited for different applications. As Leon Chua likes to emphasize, a memristor is a “state dependent resistor”. Exactly what sort of internal states a memristor has, and what sort of physics mediates these states, is left open. The result is a massive space of possibilities and opportunities in computation. By matching the physics of the memristors to our computations, we can unlock a whole new universe in electronics.

The Other Way to Use Memristors

There are two ways to develop memristors. The first way is to force them to behave as you want them to behave. Most memristors that I have seen do not behave like fast, binary, non-volatile, deterministic switches (although our Carbon-Doped SDC Devices are pretty darn good). This is a problem because this is how HP wants them to behave. Consequently a perception has been created that memristors are for non-volatile fast memory. HP wants a drop-in replacement for standard memory because this is a large and established market. That makes sense, of course, but it’s not the whole story on memristors.

Memristors exhibit a huge range of amazing phenomena. Some are very fast to switch but operate probabilistically. Others can be changed a little bit at a time and are ideal for learning. Still others have capacitance (with memory), or act as batteries. I’ve even seen some devices that can be programmed to be a capacitor or a resistor or a memristor. (Seriously). By taking some inspiration from Nature, Knowm collaborator Dr. Kris Campbell has achieved remarkable control over the device properties such as threshold voltages, resistance ranges, switching speeds, data retention, and cycling durability (more on that).

When I first envisioned a variable resistive device back in 2001 I knew it would not be very reliable. This troubled me at first until I had a realization: our brains deal with massive faults all the time. Not only are our neurons constantly dying (to the tune of hundreds of thousands per day), but our synapses don’t even work half the time! And yet our brains work. Really, really well. This realization is what led me down a path that resulted in AHaH Computing. In other words, rather than fight Nature I tried to accept and understand it. The result was a rethinking of my assumptions and the discovery of something new.

The Problem of Unreliable Components

I discovered the unsupervised AHaH plasticity rule when I was trying to solve the problem of how to deal with unreliable components in large-scale computing structures, taking inspiration from the brain.

How, in the face of both intrinsic and extrinsic volatility, can unconventional computing fabrics store information over arbitrarily long periods? Here, we argue that the predictable structure of many realistic environments, both natural and artificial, can be used to maintain useful categorical boundaries even when the computational fabric itself is inherently volatile and the inputs and outputs are partially stochastic…We conclude that the stability of long-term memories may depend not so much on the reliability of the underlying substrate, but rather on the reproducible structure of the environment itself, suggesting a new paradigm for reliable computing with unreliable components.

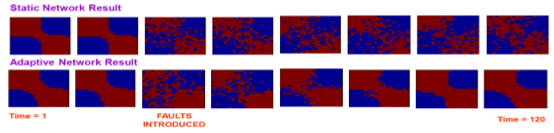

What I discovered was really quite remarkable to me. First I trained a network using an off-the-shelf SVM algorithm. Then I let the network operate under unsupervised AHaH plasticity while I subjected it to faults and errors of various kinds. What I saw was a network that spontaneously healed itself. Not only that, it got slightly better on its own without supervised training.

This result demonstrated that the attractor points of the unsupervised AHaH rule coincided with the exact optimal solution to the classification problem. As I damaged the network it would repair itself by making adjustments to the working synapses. It did not matter if some synapses failed or behaved randomly. So long as there was redundancy in the network and the majority of the synapses obeyed AHaH plasticity, the network as a whole would spontaneously heal itself. If the fault rate was below a threshold, AHaH plasticity could continuously heal. Just as we recover from cuts and scrapes and bruises–not only recovering our function but actually getting even better–so too could the AHaH network.
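The self-healing behavior described above can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not the experiment reported here: the update `x * (ALPHA * sign(y) - BETA * y)` is a generic Hebbian/anti-Hebbian rule chosen for illustration rather than the published AHaH rule, the constants are hypothetical, and uniform starting weights stand in for the SVM-trained solution. Every input line redundantly carries the class sign, a few synapses are dead or erratic, and only the working synapses adapt, with no labels used during healing.

```python
import random

ALPHA, BETA, LEARNING_RATE = 1.0, 0.25, 0.01  # hypothetical constants
N_SYNAPSES = 16

def sample(rng):
    """One input: every line redundantly carries the class sign plus noise."""
    label = rng.choice((-1, 1))
    x = [label + rng.gauss(0.0, 0.5) for _ in range(N_SYNAPSES)]
    return x, label

def output(weights, x, dead, erratic, rng):
    """Neuron output; dead synapses add nothing, erratic ones use a random weight."""
    y = 0.0
    for i, xi in enumerate(x):
        if i in dead:
            continue
        w = rng.uniform(-0.5, 0.5) if i in erratic else weights[i]
        y += w * xi
    return y

def accuracy(weights, dead, erratic, rng, trials=2000):
    """Fraction of fresh samples whose output sign matches the label."""
    hits = 0
    for _ in range(trials):
        x, label = sample(rng)
        y = output(weights, x, dead, erratic, rng)
        hits += (y > 0) == (label > 0)
    return hits / trials

def heal(weights, dead, erratic, rng, steps=3000):
    """Unsupervised updates (labels are ignored) on the working synapses only."""
    for _ in range(steps):
        x, _ = sample(rng)
        y = output(weights, x, dead, erratic, rng)
        # Hebbian push for small |y|, anti-Hebbian pull for large |y|.
        feedback = ALPHA * (1 if y >= 0 else -1) - BETA * y
        for i, xi in enumerate(x):
            if i not in dead and i not in erratic:
                weights[i] += LEARNING_RATE * xi * feedback
    return weights

def run_demo(seed=0):
    rng = random.Random(seed)
    weights = [0.1] * N_SYNAPSES   # stand-in for a supervised solution
    dead = {0, 1, 2, 3}            # synapses stuck off
    erratic = {4, 5, 6, 7}         # synapses that behave randomly
    before = accuracy(weights, dead, erratic, rng)
    heal(weights, dead, erratic, rng)
    after = accuracy(weights, dead, erratic, rng)
    return before, after

if __name__ == "__main__":
    before, after = run_demo()
    print(f"accuracy with faults: {before:.3f}, after unsupervised healing: {after:.3f}")
```

In this sketch the working synapses strengthen until the output settles near the rule's fixed point, which widens the margin against the noise injected by the erratic synapses. If the faulty synapses were to dominate the output sign, the same rule would reinforce the wrong answer, which is the toy version of the fault-rate threshold mentioned above.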

Think about this. If your car is making odd noises and falling apart, your mechanic does not say “you need to give your car more exercise”. And yet this is how it works with you and all other living things. If we could understand how this worked, then the act of using our electronics would make them better. They would literally fix themselves. Chip yields would go up and manufacturing costs would go down. Rather than fail at the first sign of a fault, our electronics would continuously self-repair, increasing reliability. In short, we could make our electronics work more like we do. Less like a machine and more like a natural machine. This is what AHaH Computing is all about and why memristors represent a paradigm shift in computing technology. Many of us know this, and we have known about it for a number of years before HP started marketing memristors.

Methodology for the Configuration and Repair of Unreliable Switching Elements

Three years before HP announced discovery of the memristor, I realized that many devices that behaved like memristors could exist and that the best model for this large variety was not deterministic switches. Rather, it was collections of non-deterministic switches, or ‘meta-stable switches’. Lacking the ability to fabricate a device myself, I set out on a path of understanding how to use them. I called my solution a “Methodology for the configuration and repair of unreliable switching elements”.
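One way to picture a device made of a collection of non-deterministic switches is the toy model below. It is only a sketch: the class name, the per-switch conductances, and the logistic dependence of flip probability on voltage are all assumptions made for illustration, not the model from the methodology named above. Each switch is either conducting or not, a voltage pulse flips each switch with some probability, and the device conductance is the sum over all switches.

```python
import math
import random

class MetaStableSwitchMemristor:
    """Toy device: N two-state switches in parallel, each flipping at random."""

    def __init__(self, n_switches=1000, g_on=1e-6, g_off=1e-8, seed=0):
        self.rng = random.Random(seed)
        self.n = n_switches
        self.n_on = 0          # switches currently in the conducting state
        self.g_on = g_on       # per-switch conductance when on (hypothetical)
        self.g_off = g_off     # per-switch conductance when off (hypothetical)

    def conductance(self):
        """Total conductance of all switches in parallel."""
        return self.n_on * self.g_on + (self.n - self.n_on) * self.g_off

    @staticmethod
    def _flip_probability(voltage, threshold=0.3, steepness=20.0, scale=0.05):
        """Assumed logistic rise of per-pulse flip probability with voltage."""
        return scale / (1.0 + math.exp(-steepness * (voltage - threshold)))

    def apply_pulse(self, voltage):
        """Apply one pulse; each switch decides independently whether to flip."""
        p_on = self._flip_probability(voltage)     # off -> on, favoured by +V
        p_off = self._flip_probability(-voltage)   # on -> off, favoured by -V
        turned_on = sum(self.rng.random() < p_on
                        for _ in range(self.n - self.n_on))
        turned_off = sum(self.rng.random() < p_off
                         for _ in range(self.n_on))
        self.n_on += turned_on - turned_off
        return self.conductance()

if __name__ == "__main__":
    device = MetaStableSwitchMemristor()
    for _ in range(50):
        device.apply_pulse(0.5)    # positive pulses raise conductance gradually
    print(device.conductance())
    for _ in range(50):
        device.apply_pulse(-0.5)   # negative pulses lower it again
    print(device.conductance())
```

Because the state is a count of randomly flipping switches, the conductance changes incrementally and noisily rather than as a clean binary toggle, which is the kind of behavior the paragraph above contrasts with deterministic switches.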

Memristors are for Uniting Memory and Processing

While HP wants to use memristors as a “universal memory” within “The Machine”, there is another way to use them. This way does not require fast binary deterministic switches. It does not require higher fabrication yields than what we can already demonstrate. This other way is called AHaH Computing and the problems that it solves are the same problems that brains solve. In my opinion, non-volatile memory is the least interesting thing about memristors.

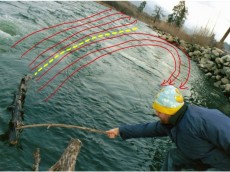

Image by genphyslab

The Solution is Not Difficult–Just Different

The solution to HP’s problem is all around us in Nature, which displays a most remarkable property. The atoms in our bodies recycle in a matter of months. Despite the fact that life is inherently volatile, it can maintain its structure and fight decay so long as energy is dissipated. It is this property of self-repair that is at the heart of self-organization. Just as a ball will roll into a depression, an attractor-based system will inevitably fall into its attractor. Perturbations will be quickly fixed as the system re-converges to its attractor. AHaH plasticity creates attractors, and understanding these attractors and how to exploit them is what AHaH Computing is all about. If your solution can be encoded in AHaH attractors then it will heal itself spontaneously if damaged. If you replace “programming” with “learning” then suddenly the chip can adapt around defects in the chip.

Image by Dave Stokes

The structure of the brain is in large part a reflection of the structure of the information it is processing. Let’s consider an analogy to a fast-flowing river. The structure of the rapids is created by the water flowing over the stream bed. Countless molecules of water come and go, but the structure of the rapids remains the same. Without the underlying stream bed, however, the structure would quickly dissipate. The structure of the stream bed is analogous to the structure of information. As the brain processes structured information patterns (and dissipates energy), its internal structure becomes a reflection of the structure of the information. The information for self-assembly and repair is in the data stream. Rather than rely on the intrinsic reliability of the individual components, AHaH Computing points us toward a new way that couples the physical structure of the chips to the information flows. The act of processing becomes the act of repair and we get, among other things, bottom-up healing.

The solution to HP’s memristor problem is straightforward, but unfortunately not something a large organization in the grips of massive restructuring is easily capable of: they need to think differently and let the technology do the talking. As Carver Mead, the godfather of neuromorphic computing, said:

Listen to the technology; find out what it’s telling you. –Carver Mead

Perhaps when HP quiets down we will all be able to hear what memristors are saying.

Related Resources

- Knowm’s Commercially Available memristors: http://knowm.org/product/bs-af-w-memristors/

- Dr. Kris Campbell’s page on knowm.org: http://knowm.org/teams/kris-campbell/

- The Story of My Memristor – Kris Campbell http://knowm.org/the-story-of-my-memristor-kris-campbell/

- Knowm Collaborates with Kris Campbell at BSU: http://knowm.org/knowm-collaborates-with-kris-campbell-at-bsu/

10 Comments

Lozi

The general theory of first-, second-, and third-order memristors, etc. (the first-order memristor is the genuine memristor discovered by Professor Leon O. Chua in 1971) is now complete (along with meminductors and memcapacitors of any order) and was published in an article I wrote with him in the International Journal of Bifurcation and Chaos (IJBC), September 2014 issue.

This paper is freely available on the site: https://www.researchgate.net/publication/261676241_MEMFRACTANCE_A_MATHEMATICAL_PARADIGM_FOR_CIRCUIT_ELEMENTS_WITH_MEMORY

The Old Ohm’s law is generalized and fitted to those memory elements.

Alex Nugent

Lozi–Looks like great work! Do you have any data showing modeling of devices using your theories? We are very interested in efficient and accurate generalized models of memristors that embrace the complexity of real-world devices.

Tim Molter

@Lozi Great paper so far. I still have a ways to go to get through it all. I noticed that you credit HP with reporting the first physical memristor in 2008, but there were several before 2008. HP was the first to call their device a memristor though and market it as such.

lozi

Thank you for your comment and references, Tim. I will use them in forthcoming papers. A friend of mine published a paper with an example dating from the 19th century.

lozi

Many thanks, Alex, for your comment. I am only a mathematician; I need to discuss with people who have great experience such as yours! I meet Prof. Chua next week in Dublin and will ask him about your question.

René

Alex Nugent

Lozi, wonderful! Just to let you and Prof. Chua know, we are working closely with Dr. Kris Campbell at BSU and have three new material stacks formulated for AHaH computing, one of which we will be packaging for research use and should be available in ~3 months.

lozi

Ok thank you

René

Sean O'Connor

It’s bad news for HP. I don’t know whether senior management were led up the garden path, or led themselves up to see the fairies.

Ted Ferguson

From what I remember about a HP presentation on “The Machine”, my favorite part was the division of information. Photons being used to transmit the information. Electricity to calculate and compute. Ions being used to store bits of information. It seemed very forward looking and progressive. You are certainly right though Alex, HP is fragmented and won’t be as effective because of it.
