A Plethora of Tools for Machine Learning

When it comes to training computers to act without being explicitly programmed, there is an abundance of tools from the field of Machine Learning. Academics and industry professionals use these tools to build applications ranging from Speech Recognition to Cancer Detection in MRI scans. Many of these tools are freely available on the web. If you’re interested, I have compiled a ranking of them (see the bottom of this page), along with an outline of some important features for differentiating between them: the language the tool is written in, a description taken from each tool’s home website, its focus on a particular paradigm in Machine Learning, and some notable uses in academia and industry.

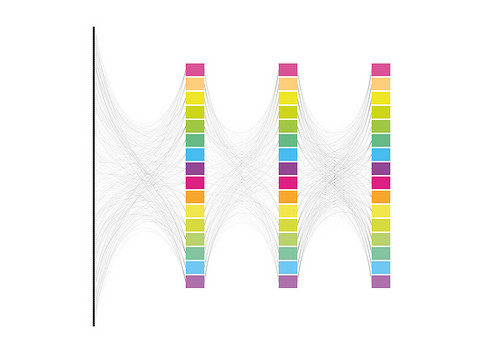

Researchers may use many different libraries at a time, write their own, or not cite any particular tool, so quantifying the relative adoption of each is difficult. Instead, a Search Rank is given, reflecting the comparative magnitude of Google searches for each tool in May. The score does not necessarily reflect widespread adoption, but it gives a good indication of which tools are being used. Note: ambiguous names like “Caffe” were evaluated as “Caffe Machine Learning” to reduce ambiguity.
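To make the idea of a relative Search Rank concrete, here is a minimal sketch of one way such a score could be computed. It assumes the rank is each tool's search volume scaled so that the most-searched tool scores 100, then rounded to a whole number. That formula is an assumption, not a method stated here, and the tool names and volumes are hypothetical placeholders rather than the data behind the table.

```python
def search_rank(volumes):
    """Scale raw search volumes so the most-searched tool scores 100.

    Assumed formula for illustration: rank = round(100 * volume / peak).
    """
    peak = max(volumes.values())
    if peak == 0:
        # No searches at all: every tool gets rank 0.
        return {name: 0 for name in volumes}
    return {name: round(100 * v / peak) for name, v in volumes.items()}


# Hypothetical volumes, for illustration only.
example_volumes = {"tool_a": 5000, "tool_b": 3900, "tool_c": 20}
ranks = search_rank(example_volumes)
print(ranks)
```

Under this assumed scaling, a tool whose volume is a tiny fraction of the leader's rounds down to 0, so a rank of 0 would mean "very few searches relative to the top tool" rather than "no searches".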

Image by xdxd_vs_xdxd

I have included a distinction between two Machine Learning sub-fields, Deep and Shallow Learning, a split that has become important over the last couple of years. Deep Learning is responsible for record results in Image Classification and Voice Recognition and is thus being spearheaded by large data companies like Google, Facebook, and Baidu. Conversely, Shallow Learning methods include a variety of less cutting-edge Classification, Clustering, and Boosting techniques, such as Support Vector Machines. Shallow Learning methods are still widely used in fields such as Natural Language Processing, Brain Computer Interfacing, and Information Retrieval.

Detailed Comparison of Machine Learning Packages and Libraries

This table also includes information about each tool’s support for working with a GPU. GPU interfacing has become an important feature for Machine Learning tools because it can accelerate large-scale matrix operations. The importance of this to Deep Learning methods is apparent. For instance, at the GPU Tech Conference in early May 2015, 39 of 45 talks given under Machine Learning were about GPU-accelerated Deep Learning applications; these came from 31 major tech companies and 8 universities. The appeal reflects the massive speed improvements from GPU-assisted training of Deep Networks, which makes GPU support an important feature.
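As a rough illustration of why matrix operations matter so much here, the sketch below writes out a matrix multiply in plain Python and counts the multiply-adds involved. The example (a batch of inputs multiplied by a weight matrix, as in one fully connected network layer) and the sizes used are assumptions chosen for illustration. The code runs on the CPU and only shows the structure of the work a GPU would parallelize.

```python
def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix, both lists of rows."""
    k = len(b)
    assert all(len(row) == k for row in a), "inner dimensions must match"
    m = len(b[0])
    # Each output cell is an independent dot product, so all n * m cells
    # could in principle be computed at the same time on parallel hardware.
    return [[sum(row[t] * b[t][j] for t in range(k)) for j in range(m)]
            for row in a]


def multiply_adds(n, k, m):
    """Number of multiply-add operations in an (n x k) by (k x m) product."""
    return n * k * m


inputs = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]      # batch of 2, 3 features each
weights = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # 3 inputs -> 2 outputs
outputs = matmul(inputs, weights)
print(outputs)

# Hypothetical layer size: batch 256, 4096 inputs, 4096 outputs.
print(multiply_adds(256, 4096, 4096))
```

Even this single hypothetical layer needs over four billion multiply-adds per batch, and none of the output cells depends on another, which is the kind of workload GPUs are built for.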

Information about each tool’s ability to distribute computation across clusters through Hadoop or Spark is also given. This has become an important talking point for Shallow Learning techniques, which suit distributed computation. Distributed computation for Deep Networks has likewise become a talking point as new techniques for distributed training have been developed.
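One reason many learning methods distribute well is that their core quantities are sums over the data, so each machine can work on its own partition and only the partial results need to be combined. The sketch below shows that pattern for the gradient of a one-parameter least-squares model. It is a toy under stated assumptions: threads stand in for cluster nodes, the model and data are made up, and no Hadoop or Spark API is used.

```python
from concurrent.futures import ThreadPoolExecutor


def partial_gradient(chunk, w):
    """Sum of squared-error gradients for the model y ~ w * x on one partition."""
    return sum(2 * (w * x - y) * x for x, y in chunk)


def distributed_gradient(data, w, n_workers=3):
    """Split the data, compute partial sums in parallel, then combine them."""
    chunks = [data[i::n_workers] for i in range(n_workers)]
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(lambda c: partial_gradient(c, w), chunks))
    # The "reduce" step: add the partial sums and average over all examples.
    return sum(partials) / len(data)


# Made-up data following y = 3x, for illustration only.
data = [(float(x), 3.0 * x) for x in range(1, 10)]
w = 0.0

single = partial_gradient(data, w) / len(data)   # all data on one machine
combined = distributed_gradient(data, w)         # data split across workers
print(single, combined)
```

Both paths give the same gradient, because the sum over all examples equals the sum of the per-partition sums. On a real cluster the extra cost is moving the partial results between machines, not the arithmetic.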

Lastly, some additional notes are attached about the varying use of these tools in academia and industry. What information exists was gathered by searching Machine Learning publications, presentations, and distributed code. Some information was also supported by researchers at Google, Facebook, and Oracle, so many thanks to Greg Mori, Adam Pocock, and Ronan Collobert.

The results of this research show that a number of tools are in use at the moment, and that it is not yet certain which will win the lion’s share of use in industry or academia.

| Search Rank | Tool | Language | Type | Description (quoted from tool website) | Use | GPU acceleration | Distributed computing | Known academic use | Known industry use |
|---|---|---|---|---|---|---|---|---|---|
| 100 | Theano | Python | Library | Numerical computation library for working efficiently with multi-dimensional arrays | Deep and Shallow Learning | CUDA and OpenCL, cuDNN | Not Yet | Geoffrey Hinton, Yoshua Bengio, and Yann LeCun | Facebook, Oracle, Google, and Parallel Dots |
| 78 | Torch 7 | Lua | Framework | Scientific computing framework with wide support for machine learning algorithms | Deep and Shallow Learning | CUDA and OpenCL, cuDNN | Cutorch | NYU, LISA Lab, Purdue e-lab, IDIAP | Facebook AI Research, Google DeepMind, certain people at IBM and several smaller companies |
| 64 | R | R | Environment/Language | Functional language and environment for statistics | Shallow Learning | RPUD | HiPLAR |  |  |
| 52 | LIBSVM | Java and C++ | Library | A Library for Support Vector Machines | Support Vector Machines | CUDA | Not Yet | Oracle |  |
| 34 | scikit-learn | Python | Library | Machine Learning in Python | Shallow Learning | Not Yet | Not Yet |  |  |
| 28 | MLlib | C++; APIs in Java and Python | Library/API | Apache Spark’s scalable machine learning library | Shallow Learning | ScalaCL | Spark and Hadoop | Oracle |  |
| 24 | Matlab | Matlab | Environment/Language | High-level technical computing language and interactive environment for algorithm development, data visualization, data analysis, and numerical analysis | Deep and Shallow Learning | Parallel Computing Toolbox (not free, not open source) | Distributed Computing Package (not free, not open source) | Geoffrey Hinton, Graham Taylor, other researchers |  |
| 18 | Pylearn2 | Python | Library | Machine Learning | Deep Learning | CUDA and OpenCL, cuDNN | Not Yet | LISA Lab |  |
| 14 | Vowpal Wabbit | C++ | Library | Out-of-core learning system | Shallow Learning | CUDA | Not Yet | Sponsored by Microsoft Research and (previously) Yahoo! Research |  |
| 13 | Caffe | C++ | Framework | Deep learning framework made with expression, speed, and modularity in mind | Deep Learning | CUDA and OpenCL, cuDNN | Not Yet | Virginia Tech, UC Berkeley, NYU | Flickr, Yahoo, and Adobe |
| 11 | LIBLINEAR | Java and C++ | Library | A Library for Large Linear Classification | Support Vector Machines and Logistic Regression | CUDA | Not Yet | Oracle |  |
| 6 | Mahout | Java | Environment/Framework | An environment for building scalable algorithms | Shallow Learning | JCUDA | Spark and Hadoop |  |  |
| 5 | Accord.NET | .NET | Framework | Machine learning | Deep and Shallow Learning | CUDA.net | Not Yet |  |  |
| 5 | NLTK | Python | Library | Programs to work with human language data | Text Classification | scikits.cuda | Not Yet |  |  |
| 4 | Deeplearning4j | Java | Framework | Commercial-grade, open-source, distributed deep-learning library | Deep and Shallow Learning | JCublas | Spark and Hadoop |  |  |
| 4 | Weka 3 | Java | Library | Collection of machine learning algorithms for data mining tasks | Shallow Learning | Not Yet | distributedWekaSpark |  |  |
| 4 | MLPY | Python | Library | Machine Learning | Shallow Learning | scikits.cuda | Not Yet |  |  |
| 3 | Pandas | Python | Library | Data analysis and manipulation | Shallow Learning | scikits.cuda | Not Yet |  |  |
| 1 | H2O | Java, Python and R | Environment/Language | Open source predictive analytics platform | Deep and Shallow Learning | Not Yet | Spark and Hadoop |  |  |
| 0 | cuda-convnet | C++ | Library | Machine learning library for neural-network applications | Deep Neural Networks | CUDA | Coming in cuda-convnet2 |  |  |
| 0 | Mallet | Java | Library | Package for statistical natural language processing | Shallow Learning | JCUDA | Spark and Hadoop |  |  |
| 0 | JSAT | Java | Library | Statistical Analysis Tool | Shallow Learning | JCUDA | Spark and Hadoop |  |  |
| 0 | MultiBoost | C++ | Library | Machine Learning | Boosting Algorithms | CUDA | Not Yet |  |  |
| 0 | Shogun | C++ | Library | Machine Learning | Shallow Learning | CUDA | Not Yet |  |  |
| 0 | MLPACK | C++ | Library | Machine Learning | Shallow Learning | CUDA | Not Yet |  |  |
| 0 | DLIB | C++ | Library | Machine Learning | Shallow Learning | CUDA | Not Yet |  |  |
| 0 | Ramp | Python | Library | Machine Learning | Shallow Learning | scikits.cuda | Not Yet |  |  |
| 0 | Deepnet | Python | Library | GPU-based Machine Learning | Deep Learning | CUDA | Not Yet |  |  |
| 0 | CUV | Python | Library | GPU-based Machine Learning | Deep Learning | CUDA | Not Yet |  |  |
| 0 | APRIL-ANN | Lua | Library | Machine Learning | Deep Learning | Not Yet | Not Yet |  |  |
| 0 | nnForge | C++ | Framework | GPU-based Machine Learning | Convolutional and fully-connected neural networks | CUDA | Not Yet |  |  |
| 0 | PYML | Python | Framework | Object oriented framework for machine learning | SVMs and other kernel methods | scikits.cuda | Not Yet |  |  |
| 0 | Milk | Python | Library | Machine Learning | Shallow Learning | scikits.cuda | Not Yet |  |  |
| 0 | MDP | Python | Library | Machine Learning | Shallow Learning | scikits.cuda | Not Yet |  |  |
| 0 | Orange | Python | Library | Machine Learning | Shallow Learning | scikits.cuda | Not Yet |  |  |
| 0 | PYMVPA | Python | Library | Machine Learning | Only Classification | scikits.cuda | Not Yet |  |  |
| 0 | Monte | Python | Library | Machine Learning | Shallow Learning | scikits.cuda | Not Yet |  |  |
| 0 | RPY2 | Python to R | API | Low-level interface to R | Shallow Learning | scikits.cuda | Not Yet |  |  |
| 0 | NeuroLab | Python | Library | Machine Learning | Feed Forward Neural Networks | scikits.cuda | Not Yet |  |  |
| 0 | PythonXX | Python | Library | Machine Learning | Shallow Learning | scikits.cuda | Not Yet |  |  |
| 0 | Hcluster | Python | Library | Machine Learning | Clustering Algorithms | scikits.cuda | Not Yet |  |  |
| 0 | FYANN | C | Library | Machine Learning | Feed Forward Neural Networks | Not Yet | Not Yet |  |  |
| 0 | PyANN | Python | Library | Machine Learning | Nearest Neighbours Classification | Not Yet | Not Yet |  |  |
| 0 | FFNET | Python | Library | Machine Learning | Feed Forward Neural Networks | Not Yet | Not Yet |  |  |

Help us Make the Bridge to Neuromemristive Processors

Knowm Inc is focused on the development of neuromemristive processors like kT-RAM. As machine learning pioneers like Geoffrey Hinton know only too well, machine learning is fundamentally related to computational power. We call it the adaptive power problem, and to solve it we need new tools to usher in the next wave of intelligent machines. While GPUs have (finally!) enabled us to demonstrate learning algorithms that approach human-level performance on some tasks, they are still a million to a billion times less energy- and space-efficient than biology. We are taking that gap to zero.

We are interested to know which packages, frameworks, and algorithms people solving real-world machine learning problems find most useful, so we can focus our effort and build a bridge to kT-RAM and the KnowmAPI. Let us know by leaving a comment below or by contacting us.

Misc. References

- Bryan Catanzaro, Senior Researcher, Baidu. “Speech: The Next Generation” 05/28/2015 Talk given @ GPUTech conference 2015

- Dhruv Batra. “CloudCV: Large-Scale Distributed Computer Vision as a Cloud Service” 05/28/2015 Talk given @ GPUTech conference 2015

- Dilip Patolla. “A GPU based Satellite Image Analysis Tool” 05/28/2015 Talk given @ GPUTech conference 2015

- Franco Mana. “A High-Density GPU Solution for DNN Training” 05/28/2015 Talk given @ GPUTech conference 2015

- Hailin Jin. “Collaborative Feature Learning from Social Media” 05/28/2015 Talk given @ GPUTech conference 2015

- Noel, Cyprian & Simon Osindero. “S5552 – Transparent Parallelization of Neural Network Training” 05/28/2015 Talk given @ GPUTech conference 2015

- Rob Fergus. “S5581 – Visual Object Recognition using Deep Convolution Neural Networks” 05/28/2015 Talk given @ GPUTech conference 2015

- Rodrigo Benenson. “Machine Learning Benchmark Results: MNIST” 05/28/2015

- Rodrigo Benenson. “Machine Learning Benchmark Results: CIFAR” 05/28/2015

- Tom Simonite. “Baidu’s Artificial-Intelligence Supercomputer Beats Google at Image Recognition” 05/28/2015
