Introduction

Knowm partner Hillary Riggs and I had the great pleasure of attending the Navy Karles Invitational Conference on Neuroelectronics, hosted by the Naval Research Laboratory in Washington DC from August 18-19, 2015. It was a small and short event, packed with some jaw-dropping presentations. The event is held in honor of a remarkable couple, 1985 Nobel Laureate in Chemistry Dr. Jerome Karle and 1993 Bower Award Laureate and 1995 National Medal of Science recipient Dr. Isabella Karle. The pair were instrumental in the development of X-ray crystallography, and both had long and distinguished careers with the Naval Research Laboratory, culminating with the Distinguished Civilian Service Award, the Navy’s highest recognition for civilian employees. Dr. Isabella Karle was in attendance both days, sitting in the front row.

This year’s conference was on the topic of ‘neuroelectronics’ and explored a range of subjects including brain imaging, brain interfacing and neuromorphic computing. The first day was dedicated mostly to brain imaging and interfacing, and the second day mostly to neuromorphic computing. I was there in large part for the second day, but I was blown away by the advances being made in the imaging and interfacing fields.

Day One

We started with a White House overview of the President’s BRAIN Initiative from Dr. Monica Basco, a report on some work at DARPA by program manager Dr. Justin Sanchez, and some general comments on neuroscience, neuromorphic computing and the BRAIN Initiative by Dr. Terrence Sejnowski. The conference then turned to a technical track and we dove into the current state of neuroelectronics. Every talk was great, but here are the highlights from my perspective.

Dr. Stephen Furber

Dr. Furber gave an overview of the SpiNNaker project. There was some overlap with his presentation at the NICE conference earlier in the year, as one would expect, but also some new updates on the project, particularly on the software side, and some historical notes on Alan Turing. The SpiNNaker project is a truly impressive feat of computer engineering: a massive network of 100,000 (soon to be a million) ARM processor cores interconnected with a lightweight packet-switched communications fabric. I had the opportunity to speak with Dr. Furber, express my admiration for building such a system, and talk about his history co-designing the first ARM processors. Going from chips to boards to server racks, and now adding the software layer, is a significant accomplishment. With the hardware in place and the software taking shape, this project is getting ready to deliver some interesting results, and I am very excited to see what unfolds.
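For readers wondering how such a fabric moves spikes around, here is a minimal sketch, assuming the key/mask multicast scheme SpiNNaker routers are generally described as using: each spike packet carries a source key, and a router forwards it to every output listed in the first table entry whose masked key matches, falling back to a default route otherwise. The entry values, output names and the `route` helper are hypothetical illustrations, not SpiNNaker’s actual software API.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class RouteEntry:
    """One multicast routing entry (all values used below are hypothetical)."""
    key: int
    mask: int
    outputs: FrozenSet[str]  # output links and/or local cores


def route(table: List[RouteEntry], packet_key: int,
          default: FrozenSet[str] = frozenset({"straight-through"})) -> FrozenSet[str]:
    """Return the outputs for a spike packet; the first entry whose masked key matches wins."""
    for entry in table:
        if (packet_key & entry.mask) == entry.key:
            return entry.outputs
    # No entry matched: send the packet along a default route.
    return default


# Hypothetical table: the upper 16 bits of the key identify a population of source neurons.
TABLE = [
    RouteEntry(key=0x0001_0000, mask=0xFFFF_0000, outputs=frozenset({"core-3", "link-N"})),
    RouteEntry(key=0x0002_0000, mask=0xFFFF_0000, outputs=frozenset({"link-E"})),
]

if __name__ == "__main__":
    print(sorted(route(TABLE, 0x0001_0005)))  # a spike from a neuron in population 1
    print(sorted(route(TABLE, 0x0007_0001)))  # unknown population: default route
```

Because a packet names only its source and every router decides locally where copies go, one spike can fan out to many destinations without the sender knowing who listens.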

The Baroness Susan Greenfield

Most scientists could best be described as a tad introverted, shy, and dense. Dr. Greenfield is not a normal scientist! She has rather impressive oratory skills, and in addition to elaborating on her theories on Alzheimer’s, she used her talk to discuss something that I believe we all need to have a frank conversation about. Our modern technological age, she argued, is creating a generation of minds addicted to the internet and computers. In her view, the technological lifestyle is literally changing people’s minds, which manifests itself in short attention spans, recklessness, poor empathy, fragile identities and adversarial outlooks. She has an upcoming book called “Mind Change” that I plan on reading.

Dr. Marcel Adam Just

This talk blew my mind. The implications of Dr. Just’s work are profound for multiple reasons, but let’s get right to it: we can now read a person’s thoughts through a combination of fMRI and machine learning. The first implication is perhaps best summarized by a question and answer at the end of the talk.

Audience Member: “Could this technology be used for lie detection?”

Dr. Just: “Well, if you can read what somebody is thinking, you don’t have to detect lies.”

While your brain is chewing on the implications of this, let me jump to a less obvious but profound implication: what this reveals about brain structure. The most surprising thing, in my opinion, is that it works at all. What Dr. Just and his group are doing amounts to creating a neural heat-map with fMRI and using machine learning to find the underlying components inherent in thought. For example, when somebody thinks about an “orange”, their brain activates representations for a color, a shape, a fruit, and other associations. By identifying these “thought components” with machine learning, they can predict with high accuracy which object the person is thinking about. They have gone further, decomposing human emotion into a “basis set” of three components that they call “Valence” (is it good or bad), “Arousal” and “Social Interaction”. This really resonated with me, as it resembles work I did a number of years back linking reward signals and emotion, which I called “emotional memory”. My thought was that emotion manifests during changes in reward levels. If each reward channel can be in one of two states (rising or falling), and we have three reward “centers”, then there are 2 × 2 × 2 = 8 combinations, giving one primary emotion for each combination. I figured the three centers might be something like “Energy”, “Novelty” and “Social”.
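To make decoding from “thought components” a little more concrete, here is a toy sketch on synthetic data. It assumes a linear encoding model in which each semantic feature contributes a fixed voxel pattern, predicts the activation each candidate concept should produce, and picks the candidate that best matches the observed scan. The features, the random voxel patterns and the nearest-match decoder are all illustrative assumptions; this is a generic technique, not Dr. Just’s actual pipeline, which fits its model to real fMRI recordings.

```python
import math
import random
from typing import Dict, List

FEATURES = ["color", "round", "fruit", "tool"]

# Hypothetical semantic feature codes ("thought components") for a few concepts.
CONCEPTS: Dict[str, Dict[str, float]] = {
    "orange": {"color": 1.0, "round": 1.0, "fruit": 1.0, "tool": 0.0},
    "banana": {"color": 1.0, "round": 0.0, "fruit": 1.0, "tool": 0.0},
    "ball":   {"color": 1.0, "round": 1.0, "fruit": 0.0, "tool": 0.0},
    "hammer": {"color": 0.0, "round": 0.0, "fruit": 0.0, "tool": 1.0},
}
N_VOXELS = 50


def make_basis(seed: int = 0) -> Dict[str, List[float]]:
    """Random per-feature voxel patterns, standing in for a fitted encoding model."""
    rng = random.Random(seed)
    return {f: [rng.gauss(0.0, 1.0) for _ in range(N_VOXELS)] for f in FEATURES}


def predict_image(features: Dict[str, float], basis: Dict[str, List[float]]) -> List[float]:
    """Linear encoding model: each voxel is a weighted sum of the feature patterns."""
    return [sum(features[f] * basis[f][v] for f in FEATURES) for v in range(N_VOXELS)]


def cosine(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def decode(observed: List[float], basis: Dict[str, List[float]]) -> str:
    """Pick the candidate concept whose predicted activation best matches the observation."""
    return max(CONCEPTS, key=lambda c: cosine(observed, predict_image(CONCEPTS[c], basis)))


if __name__ == "__main__":
    basis = make_basis()
    rng = random.Random(1)
    clean = predict_image(CONCEPTS["orange"], basis)
    scan = [x + rng.gauss(0.0, 0.3) for x in clean]  # simulated noisy "scan"
    print(decode(scan, basis))
```

Note that “ball” and “banana” share most of their components with “orange”, so the decoder has to rely on the one component each lacks; that is exactly why a component-based model can discriminate between related concepts.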

Perhaps the most interesting implication of Dr. Just’s work is what it says about the structure of the human brain and learning. We know that the human brain is highly plastic but has genetically determined large-scale connectivity. What really surprises me is that the same regions of the brain are active for the same abstract concepts from one person to the next. That is, when I think of an “orange” and parts of my cortex light up corresponding to shape, color, texture, etc., the same places in your brain light up to represent the same abstract concepts! It would appear that while the human brain must learn these concepts, the location where the learning occurs is genetically determined.

Dr. Timothy Harris

Dr. Harris had to be the most upbeat and cheerful person at the conference, and he had good reason to be. Harris is doing one of the most important things to promote the advancement of neuroscience: his team is designing and fabricating advanced neural probes and making them available to the research community at cost. People often forget that seeing farther than others requires the appropriate tools, and those who make the tools available and affordable are the ones who really change the world.

Day Two

Dr. Kwabena Boahen

You know you are in the presence of a smart guy when he can take complicated ideas and make them simple. This is essentially what Dr. Boahen did, comparing the scaling problem of CMOS transistors to traffic. He showed that as CMOS transistors are scaled down and the number of electron ‘lanes’ gets close to one, the devices exhibit extremely large noise because individual electrons become trapped in local energy minima (potholes) and block the traffic. When transistors are large, any “stuck electrons” are easily bypassed and their effect on the total current is minimal. However, when the devices approach the natural ‘lane width’ of an electron (about 2.7 nm, if I remember correctly), a single stuck electron could reduce the current by 50% or even shut down the whole device. The answer to these problems, Dr. Boahen believes (and I agree), lies in distributed, fault-tolerant analog architectures inspired by the brain. Dr. Boahen also discussed his latest creation, Neurogrid, and its application to a robotic arm encoder, and hinted at a new project called “Brainstorm”.
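Dr. Boahen’s traffic analogy boils down to one line of arithmetic. The toy model below is my own simplification, not his device physics: it assumes the current divides equally among N parallel electron “lanes” and that a trapped electron blocks its lane completely, so a single stuck electron removes a fraction 1/N of the current.

```python
def remaining_current_fraction(lanes: int, stuck: int = 1) -> float:
    """Fraction of nominal current left when `stuck` of `lanes` parallel lanes are blocked.

    Toy assumptions: every lane carries an equal share of the current, and a
    trapped electron blocks its lane completely.
    """
    if lanes < 1:
        raise ValueError("need at least one lane")
    blocked = min(max(stuck, 0), lanes)
    return (lanes - blocked) / lanes


if __name__ == "__main__":
    for lanes in (1000, 100, 10, 2, 1):
        print(f"{lanes:>5} lanes -> {remaining_current_fraction(lanes):.1%} of current remains")
```

The same single trap that costs a wide device a tenth of a percent of its current costs a two-lane device half of it, and a one-lane device all of it.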

Dr. Jennifer Hasler

Dr. Hasler’s presentation focused on her recent work elucidating a ‘neuromorphic roadmap’. She has shown how modern digital computing has hit an efficiency wall of about 10 MMAC/s/mW (million multiply-accumulates per second per milliwatt) for 32-bit multiply-accumulates. She emphasized the importance of co-locating memory and computation, a point we at Knowm Inc. of course agree with, so much so that we have proposed uniting the two via our technology stack. Dr. Hasler brings a great deal of insight and experience to the field of neuromorphic computing, starting with the co-invention of the single-transistor synapse with neuromorphic godfather Carver Mead.
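To put that efficiency wall in more familiar units: a milliwatt is a millijoule per second, so 10 MMAC/s/mW means 10 million multiply-accumulates per millijoule, or about 100 pJ per 32-bit MAC. A small helper makes the conversion explicit (the function name is just for illustration):

```python
def mmac_per_s_per_mw_to_pj_per_mac(efficiency: float) -> float:
    """Convert an efficiency in MMAC/s/mW into energy per MAC in picojoules.

    1 MMAC/s/mW = 1e6 MAC/s per 1e-3 W = 1e9 MAC per joule.
    """
    macs_per_joule = efficiency * 1e9
    return 1e12 / macs_per_joule  # 1 J = 1e12 pJ


if __name__ == "__main__":
    print(mmac_per_s_per_mw_to_pj_per_mac(10))  # the ~10 MMAC/s/mW wall cited in the talk
```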

Dr. Narayan Srinivasa

It was good to see Dr. Srinivasa again. I fondly remember sitting through the 12-hour site reviews at HRL, watching presentation after presentation from the impressive researchers working under his guidance in the SyNAPSE program (like Dr. Hasler). In this presentation, Dr. Srinivasa discussed the end result of that effort: a neuromorphic chip capable of on-chip STDP learning. He showcased a recent demonstration of the chip coupled to a drone, learning ‘environmental patterns’ in one journey through a room. It was unclear whether the chip itself was used for drone control, or how those environmental patterns were employed toward a task. However, he did show the chip learning a basis set, and its response to changes in data distributions. It would be nice to see the chip compared against well-known machine learning methods on standardized benchmarks.
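For readers new to STDP (spike-timing-dependent plasticity), the textbook pair-based form of the rule is sketched below: a synapse is strengthened when the presynaptic spike precedes the postsynaptic spike and weakened when the order is reversed, with an exponential dependence on the timing difference. The amplitudes and time constants are hypothetical, and HRL’s chip may well implement a different variant.

```python
import math
from typing import Sequence


def stdp_dw(dt_ms: float, a_plus: float = 0.010, a_minus: float = 0.012,
            tau_plus_ms: float = 20.0, tau_minus_ms: float = 20.0) -> float:
    """Pair-based STDP weight change for dt_ms = t_post - t_pre (parameters hypothetical)."""
    if dt_ms > 0:   # pre fired before post: potentiate
        return a_plus * math.exp(-dt_ms / tau_plus_ms)
    if dt_ms < 0:   # post fired before pre: depress
        return -a_minus * math.exp(dt_ms / tau_minus_ms)
    return 0.0


def apply_stdp(w: float, pre_spikes: Sequence[float], post_spikes: Sequence[float],
               w_min: float = 0.0, w_max: float = 1.0) -> float:
    """Sum the updates over all pre/post spike pairs (all-to-all pairing), then clip."""
    for t_pre in pre_spikes:
        for t_post in post_spikes:
            w += stdp_dw(t_post - t_pre)
    return min(max(w, w_min), w_max)


if __name__ == "__main__":
    # Pre consistently leads post by 5 ms, so the synapse should strengthen.
    print(apply_stdp(0.5, pre_spikes=[10.0, 50.0], post_spikes=[15.0, 55.0]))
```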

Dr. Kaushik Roy

Dr. Roy spoke of his work with the emerging technology of Lateral Spin Valves (LSVs) to emulate neuron function, and in some cases synapses. LSVs offer some advantages for neuron thresholding functions due to their lower power consumption compared to CMOS, although they introduce a few constraints that make them less suitable for synapses.

Dr. Bryan Jackson

I was pleased to see Dr. Jackson again. He gave a good overview of True North, the massive neuromorphic chip his group designed and built under the DARPA SyNAPSE program. Dr. Jackson was filling in for Dr. Dharmendra Modha, who was busy hosting their ‘boot camp’ training session. He showcased a few results, such as pedestrian and automobile identification in streaming video, as well as run-time power metrics. He made it clear that the recent Wired article was a misrepresentation: the multiple single-board computers with True North chips were not linked to form a “rat brain”, but were simply sitting on a network, available to the boot-camp participants. I was very glad to see some primary performance benchmarks on MNIST. They apparently have two ways of training their spike-based networks, which they call “Train and Constrain” and “Constrain and Train”. The first method trains a typical floating-point network with existing deep learning methods and then converts it to spikes. The second finds new methods to train directly within the spike space. He noted that the second approach was much more efficient, but he did not disclose how it was done, other than to indicate that it was a form of error back-propagation and did not appear to be based on unsupervised feature learning or stacked auto-encoders. Keep in mind that this learning does not occur on True North, as the chip is not capable of on-chip learning.
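The “Train and Constrain” idea can be illustrated generically: train with ordinary floating-point weights, then map them onto a restricted hardware form and drive the result with spikes. The sketch below assumes a simplified target of ternary weights {-1, 0, +1} and Bernoulli rate coding of inputs; these choices, the threshold and the helper names are my own illustrative assumptions, not IBM’s conversion procedure or True North’s actual synapse model.

```python
import random
from typing import List


def ternarize(weights: List[float], threshold_frac: float = 0.5) -> List[int]:
    """Constrain float weights to {-1, 0, +1}; weights below threshold_frac * max|w| become 0."""
    scale = max((abs(w) for w in weights), default=0.0)
    if scale == 0.0:
        return [0] * len(weights)
    cutoff = threshold_frac * scale
    return [0 if abs(w) < cutoff else (1 if w > 0 else -1) for w in weights]


def rate_encode(x: List[float], n_steps: int, rng: random.Random) -> List[List[int]]:
    """Bernoulli rate coding: an input of intensity p in [0, 1] spikes with probability p per step."""
    return [[1 if rng.random() < p else 0 for p in x] for _ in range(n_steps)]


def spike_count_output(spike_trains: List[List[int]], weights: List[int]) -> int:
    """Accumulate weighted input spikes over all time steps (a stand-in for a neuron's drive)."""
    return sum(s * w for step in spike_trains for s, w in zip(step, weights))


if __name__ == "__main__":
    float_w = [0.9, -0.8, 0.1, -0.05]   # "trained" weights, made up for illustration
    tern_w = ternarize(float_w)
    rng = random.Random(0)
    spikes = rate_encode([0.8, 0.2, 0.5, 0.5], n_steps=100, rng=rng)
    print(tern_w, spike_count_output(spikes, tern_w))
```

Pruning small weights to zero and collapsing the rest to a sign is the crudest possible constraint; it shows why a converted network can lose accuracy relative to its floating-point parent.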

True North MNIST Benchmarks

| Cores | Synapses | Accuracy |
|---|---|---|
| 5 | 327,680 | 92% |
| 30 | 1,966,080 | 99% |
| 1920 | 125,829,120 | 99.6%? |
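The synapse column follows directly from the core count. Assuming the commonly cited True North figures of 256 × 256 = 65,536 synapses per core and 4,096 cores per chip, a quick check reproduces each row and shows that the 1920-core configuration uses just under half of the chip:

```python
SYNAPSES_PER_CORE = 256 * 256   # each core: a 256-axon x 256-neuron crossbar
CORES_PER_CHIP = 4096


def synapses_and_chip_fraction(cores: int):
    """Return (synapse count, fraction of one chip's cores) for a given core count."""
    return cores * SYNAPSES_PER_CORE, cores / CORES_PER_CHIP


if __name__ == "__main__":
    for cores in (5, 30, 1920):
        synapses, frac = synapses_and_chip_fraction(cores)
        print(f"{cores:>5} cores: {synapses:>11,} synapses, {frac:.1%} of the chip")
```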

A few things jump out from these metrics. The first, and perhaps most informative, is the 5-core 92% result. This is important because 92% is easily achieved by thresholding the raw pixels and using a linear classifier on the resulting 784-element binary vector. Using 327,680 synapses to reach primary performance parity with a linear classifier seems a bit weak, although this may be due to a more complex spike encoding method. As they formed committees of networks (a common trick in machine learning), they were able to achieve very nice run-time primary performance, at the expense of over 125 million synapses and about half of the chip’s cores. Yann LeCun, a pioneer in machine learning, has some rather blunt comments on this.
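For comparison, the kind of baseline mentioned above is easy to sketch: threshold each pixel to 0 or 1, train a linear classifier on the binary vector, and optionally combine several classifiers into a committee by majority vote. The toy below uses a multiclass perceptron on made-up 4-pixel “images” rather than MNIST’s 784 pixels; the data and helper names are hypothetical, and it illustrates the pipeline only, making no claim about the accuracy figures in the table.

```python
from typing import List, Sequence, Tuple


def binarize(pixels: Sequence[int], threshold: int = 128) -> List[int]:
    """Threshold raw 0-255 pixel intensities into a binary feature vector."""
    return [1 if p >= threshold else 0 for p in pixels]


def scores(W: List[List[float]], x: List[int]) -> List[float]:
    xb = x + [1]  # append a constant bias input
    return [sum(w * v for w, v in zip(row, xb)) for row in W]


def predict(W: List[List[float]], x: List[int]) -> int:
    s = scores(W, x)
    return max(range(len(W)), key=s.__getitem__)


def train_perceptron(data: List[Tuple[List[int], int]], n_classes: int,
                     epochs: int = 20) -> List[List[float]]:
    """Multiclass perceptron: unless the true class strictly wins, pull it up and the rival down."""
    W = [[0.0] * (len(data[0][0]) + 1) for _ in range(n_classes)]
    for _ in range(epochs):
        for x, y in data:
            s = scores(W, x)
            rival = max((k for k in range(n_classes) if k != y), key=s.__getitem__)
            if s[y] <= s[rival]:
                for i, v in enumerate(x + [1]):
                    W[y][i] += v
                    W[rival][i] -= v
    return W


def committee_vote(models: List[List[List[float]]], x: List[int]) -> int:
    """Majority vote over several independently trained linear classifiers."""
    votes = [predict(W, x) for W in models]
    return max(set(votes), key=votes.count)


# Hypothetical raw "images" (4 pixels each) and their class labels.
RAW = [([255, 240, 10, 0], 0), ([5, 0, 250, 255], 1), ([255, 0, 255, 0], 2)]
DATA = [(binarize(p), y) for p, y in RAW]

if __name__ == "__main__":
    # Committee members see the training data in different (rotated) orders.
    committee = [train_perceptron(DATA[k:] + DATA[:k], n_classes=3) for k in range(3)]
    print(committee_vote(committee, binarize([250, 200, 30, 20])))
```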

One part of Dr. Jackson’s talk stood out, and it highlights one of the struggles researchers from lesser name-brand organizations face. At the start of the talk he pointed out how Wired’s recent article got a number of facts wrong. Later in his presentation, he used examples of press coverage to say “You don’t have to believe us that our chip is awesome…just listen to them”, pointing to his slide showing Scientific American and other well-known publications.

The only things that matter are primary and secondary performance benchmarks. After seven years, I am glad to see some numbers finally coming out!

Dr. Stanley Williams

Dr. Williams’ talk was an interesting “back to basics” talk, showcasing their work with what they call the “neuristor”. He shared a number of very interesting theoretical insights and connections, spoke highly of the work of Dr. Leon Chua, and showed data from tests with the neuristor. He highlighted how amazingly rich dynamical behavior can be generated from such a simple device, and proposed it as a good test bed for studying edge-of-chaos behavior.

Dr. Viviana Gradinaru

Most of Dr. Gradinaru’s talk was far outside my area of expertise, but the basics of her work with CLARITY are fairly straightforward. The basic problem is that it’s hard to see into brains. We would ideally like to monitor the state of thousands or millions of neurons in real time in a waking animal, but the brain is an opaque brown goo. No problem for Dr. Gradinaru: her work with CLARITY, a tissue-clearing method, renders brain tissue transparent, and her lab pairs it with optogenetics, which uses light to control genetically targeted neurons. When coupled with fluorescence techniques, it becomes possible to peer directly into the brain’s circuitry. Amazing!

Dr. Richard Anderson

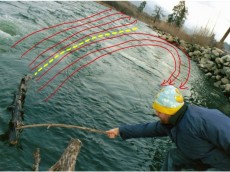

Dr. Anderson’s work gave me goosebumps. He showcased his group’s work implanting cortical microelectrode arrays into multiple regions of the human cortex specialized for grasping and reaching. The result? Tetraplegic patients controlling robotic arms, picking up objects and pointing to objects with astounding fluidity and accuracy. While this work blurs the lines between man and machine, and in so doing raises some rather difficult ethical issues around machines controlled by the human mind, one thing is undeniably clear: the expressions of pure joy on the faces of the paralyzed patients as they control an arm. One man’s desire was to drink a beer on his own, and he did it. To me, that speaks volumes about the potential of the technology.

General Comments on Neuromorphics

The conference was small but had a good representative sampling of leading neuromorphic researchers and their work. I was of course familiar with most of it, and my criticisms should be read alongside my overwhelming agreement with the field as a whole and my admiration for the accomplishments of those who presented.

Insufficient or Incomplete Tool Set

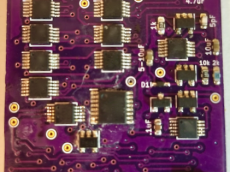

The tools historically available to chip designers have not been suitable for efficient on-chip learning. Dr. Boahen combines analog and digital methods in CMOS electronics, which has no efficient synapse analog. Dr. Hasler pioneered the single-transistor synapse, but a closer look at this structure shows that it requires high voltages for adaptation, has limited cycle endurance, and is better thought of as an analog programmable synapse. Dr. Jackson and IBM go fully digital, taking advantage of IBM’s access to state-of-the-art fabs and over $53 million in government funding, but have lost learning in the process. I can only conclude that such smart and well-funded groups have not delivered a learning-chip solution because they lack the proper key tool. I believe this tool is <a href="/product/sdc-tungsten-1x16-memristor-array/">a CMOS-compatible, bi-directional, incremental, voltage-dependent memristor with low (voltage) thresholds of adaptation</a>. This is why Knowm Inc. is strongly focused on acquiring and optimizing such technology.
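To make the phrase “bi-directional incremental voltage-dependent memristor with low thresholds of adaptation” concrete, here is a deliberately simple behavioral model: below a threshold voltage the device can be read without disturbing it, and above threshold each pulse nudges its conductance up or down, depending on polarity, by an amount that grows with the excess voltage. The linear update, the bounds and all parameter values are hypothetical and are not measured characteristics of any Knowm device.

```python
from dataclasses import dataclass


@dataclass
class ThresholdMemristor:
    """Toy threshold memristor; every parameter value here is hypothetical."""
    g: float = 0.5             # normalized conductance
    g_min: float = 0.0
    g_max: float = 1.0
    v_threshold: float = 0.2   # volts: pulses at or below this magnitude only read the device
    rate: float = 0.1          # conductance change per volt above threshold, per pulse

    def apply_pulse(self, v: float) -> float:
        """Apply one voltage pulse and return the resulting conductance."""
        if abs(v) <= self.v_threshold:
            return self.g                      # sub-threshold: non-destructive read
        delta = self.rate * (abs(v) - self.v_threshold)
        self.g += delta if v > 0 else -delta   # polarity sets the direction (bi-directional)
        self.g = min(max(self.g, self.g_min), self.g_max)
        return self.g


if __name__ == "__main__":
    m = ThresholdMemristor()
    for v in (0.1, 0.7, 0.7, -0.7, 0.15):
        print(f"pulse {v:+.2f} V -> g = {m.apply_pulse(v):.3f}")
```

The low threshold is what matters for learning: reads below it leave the state intact, while small pulses just above it give fine-grained, incremental adaptation in either direction.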

Inability to Recognize Adaptation/Plasticity and Learning Algorithms as Different Things

Dr. Jackson defended True North’s programmatic, non-learning approach to me by saying that learning algorithms are constantly changing and, if they had gone the route of including learning in True North, they might have used Support Vector Machines, since at the time those were the most popular. I agree with this in part, although I would challenge the notion that a learning algorithm and adaptation are the same thing. A chip capable of adaptation is not necessarily a chip constrained to learning in only one way. We have only come to this understanding through our work with AHaH Computing and our discovery that we can implement a number of algorithms on top of a more generic “adaptive synapse substrate”. Learning algorithms become specific instruction-set sequences, or routines, where each operation results in Anti-Hebbian or Hebbian learning. At the lowest level, Anti-Hebbian just means “move the synapse toward zero” and Hebbian means “move it away from zero”. People have come to the misconception that we are only working with a ‘local’ or ‘fixed’ learning rule. On the contrary, we have defined an instruction set in which the local unsupervised rule is just one possibility, and which does not preclude global computations. The space of AHaH-based learning algorithms is very large!
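The two primitives described above can be sketched in a few lines. The class and routine below are hypothetical illustrations, not Knowm’s AHaH instruction set: Anti-Hebbian moves a weight toward zero without crossing it, Hebbian moves it away from zero, and a simple error-driven routine is built purely by choosing which primitive to apply to each synapse.

```python
from typing import List


def sign(x: float) -> int:
    return (x > 0) - (x < 0)


class AdaptiveSynapse:
    """A single adaptive weight exposing only two primitive operations (hypothetical)."""

    def __init__(self, w: float = 0.0):
        self.w = w

    def anti_hebbian(self, step: float) -> None:
        """Move the weight toward zero by up to `step`, never crossing zero."""
        self.w -= sign(self.w) * min(step, abs(self.w))

    def hebbian(self, step: float, direction: int = 1) -> None:
        """Move the weight away from zero by `step`; `direction` decides the side at w == 0."""
        self.w += (sign(self.w) or direction) * step


def supervised_routine(synapses: List[AdaptiveSynapse], x: List[int], target: int,
                       step: float = 0.1) -> None:
    """Hypothetical routine: nudge each active synapse toward voting for the target label."""
    for syn, xi in zip(synapses, x):
        if xi == 0:
            continue                        # inactive input: leave the synapse alone
        desired = sign(target * xi)         # weight sign that would vote for the target
        if syn.w == 0 or sign(syn.w) == desired:
            syn.hebbian(step, direction=desired)   # reinforce, or pick a side from zero
        else:
            syn.anti_hebbian(step)                 # weaken a synapse voting against


if __name__ == "__main__":
    syns = [AdaptiveSynapse(0.2), AdaptiveSynapse(-0.2), AdaptiveSynapse(0.5)]
    supervised_routine(syns, x=[1, 1, 0], target=1)
    print([round(s.w, 3) for s in syns])
```

The point of the sketch is the layering: the substrate only knows “toward zero” and “away from zero”, and the choice of which to apply, and when, is where a particular learning algorithm lives.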

Almost Complete Lack of Primary Performance Benchmarking

The only(!!) primary performance benchmark I saw in all the neuromorphic presentations was Dr. Jackson’s MNIST result. This is profoundly troubling to me, as I see almost no point in building neuromorphic chips aside from their real-world commercial value. I’m sure many in the neuromorphic field will disagree with my stance on this, but I also feel most of the rest of the world sides with me. We need to see far more emphasis on primary performance benchmarking and honest comparisons with standard off-the-shelf machine learning algorithms. Neuromorphic computing holds great promise as a means of solving real-world problems, but until it shapes up and focuses its priorities on primary performance benchmarks, it’s going to remain an expensive luxury for a few well-funded organizations.
