It is a curious fact that there is no general agreement on what intelligence actually is. Most of us recognize that humans appear to possess a large amount of it, as do other animals, both as individuals and as groups or swarms. Plants have been shown to display remarkable capabilities: they communicate with each other, mount chemical defenses and search the soil and air for resources. The space of what intelligence could be is so large that we must find some way to collapse the search space, to focus on a smaller subset of possibilities where we believe a solution can be found. For many decades (centuries?) humans have done this. Two dominant approaches today are Machine Learning (ML) and Neuromorphics. Each approach can be decomposed into sub-types with specific focuses, there is increasing cross-over between them, and in some cases very different approaches point to similar answers. The solution to machine intelligence could come out of one or more of these approaches, or even from a radically new direction out of the blue. Wherever it comes from, it is important for the public and funding agencies to be able to recognize it before it arrives or, at the very least, once it has arrived.

Image by Hunter-Desportes

Toward that end, I offer below some of my personal lessons learned. I come from the perspective of somebody who has spent the last 12 years trying to solve the hard problem of intelligence under difficult constraints, but also of somebody who has had the privilege of watching many others attempt it. I’ve begun to notice patterns and what I perceive to be pitfalls.

Quantify Utility and Ease of Use

The single most important property of an AI technology is its utility. How easy is it for somebody with average intelligence to use the AI to solve a problem? Note that an extremely sophisticated technology that provides exceptionally high performance metrics might be so complicated that only a single person (who has dedicated their life to it) can operate or understand it. In this case the technology is close to useless because nobody else can use it. On the other hand, a technology that works “out of the box” and generally solves a wide range of problems at modest to high performance metrics with only a few hours of operator training is very useful.

The utility of a technology is ultimately evaluated in the marketplace. Simple, robust, effective and affordable solutions generally win out, but not always. The challenge for funding agencies is identifying such technology before it is commercialized. Once a technology exists, clear and quantitative measures should be created to assess its usability. I believe the single most important metric for an AI technology is something like “human-education-hours until deployed solution”. That is, an AI technology that can be brought to bear on a problem by a person with a high-school diploma in 6 hours is much more useful than one that requires a PhD and the same amount of time. The lower the barrier, the better, provided of course that it solves problems effectively.

Define Primary and Secondary Performance Metrics

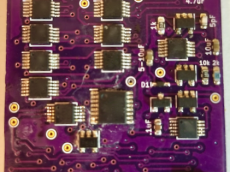

The purpose of technology is to solve a problem in the world. How well it solves this problem should be measured by a primary performance metric. Consider a fancy neuromorphic chip. It may have many applications such as machine vision, speech recognition, and natural language processing. In this case we need at least three primary metrics to assess these capabilities. Power, space and weight are secondary performance metrics. A chip that is exceptionally space and power efficient but does not solve a primary problem is useless!

| Primary Metric | Secondary Metric | Application Area |
|---|---|---|
| Peak F1 | Speed | Multi-Label Pattern Classification |
| Object Acquisition | Energy/Time Used | Robotic Control System |
| Fitness | Time until Solution | Combinatorial Optimization |

A primary performance metric is non-negotiable. If you are building a classifier then you must measure its classification performance. If you are building a robot to pick up an object, you have to measure its ability to pick up objects. Any AI technology that cannot be measured on one or more primary performance metrics cannot be evaluated against competing technologies and is, to be blunt, useless.
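
As a minimal sketch of what measuring a primary metric can look like, consider the "Peak F1" entry from the table. The code below assumes a simplified binary (single-label) classifier that emits one score per example; the function names `f1_score` and `peak_f1` and the toy data are illustrative assumptions, not part of any particular library or benchmark.

```python
def f1_score(y_true, y_pred):
    """F1 for binary labels (1 = positive, 0 = negative)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp == 0:
        return 0.0  # no true positives: precision and recall are both zero
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def peak_f1(y_true, scores):
    """Sweep the decision threshold over every observed score.

    Returns (best_f1, best_threshold). An example is predicted
    positive when its score is >= the threshold.
    """
    best_f1, best_thr = 0.0, None
    for thr in sorted(set(scores)):
        preds = [1 if s >= thr else 0 for s in scores]
        f1 = f1_score(y_true, preds)
        if f1 > best_f1:
            best_f1, best_thr = f1, thr
    return best_f1, best_thr


# Toy data (made up for illustration only).
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.3, 0.1]
best_f1, best_thr = peak_f1(labels, scores)
print(round(best_f1, 3), best_thr)  # 0.857 0.6
```

The point is not this particular metric but that a single number, computed the same way for every competing technology, makes comparison possible at all.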

Tradeoffs between primary and secondary performance benchmarks occur all the time. However, secondary metrics are only important if primary metrics have been achieved. A chip claiming very high power efficiency must also demonstrate acceptable primary performance metrics, otherwise its power-efficiency claim is meaningless and only serves as a distraction from its limitations. In the field of Neuromorphics I have witnessed a tremendous amount of energy wasted on ideas that could have been evaluated, and then dropped or (better yet) improved, using computer simulations of simple primary performance benchmarks.
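
One way to picture the rule "secondary metrics only matter once the primary metric is met" is as a two-stage comparison: first filter on the primary metric, then rank the survivors on the secondary one. The sketch below is only an illustration under assumptions of my own choosing: the `Candidate` class, the `rank` function, the chip names and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    primary: float    # e.g. peak F1; higher is better
    secondary: float  # e.g. energy per inference; lower is better


def rank(candidates, primary_floor):
    """Drop candidates below the primary floor, then sort by secondary metric."""
    qualified = [c for c in candidates if c.primary >= primary_floor]
    return sorted(qualified, key=lambda c: c.secondary)


# Hypothetical chips with made-up numbers.
candidates = [
    Candidate("chip_a", primary=0.91, secondary=2.0),
    Candidate("chip_b", primary=0.40, secondary=0.1),  # very efficient, but fails the task
    Candidate("chip_c", primary=0.88, secondary=1.2),
]

ranked = rank(candidates, primary_floor=0.85)
print([c.name for c in ranked])  # ['chip_c', 'chip_a']
```

Note that `chip_b`, by far the most power-efficient entry, never enters the ranking because it does not clear the primary floor.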

Recognize Appeals to Biological Realism as a Secondary and Largely Unimportant Performance Metric

The field of Neuromorphics suffers from this problem, and it is a serious problem. Researchers will proudly demonstrate how ‘biologically realistic’ their system is. How detailed their models are, how close to monkey cortex their connection topologies are, how many forms of plasticity they have incorporated, how many compartments in their neurons, how complex their dendritic trees, all the way down to ion channels and gene regulation. The list is almost endless because the complexities of biology are almost endless. Absolutely none of those complexities get us closer to solving a primary performance metric. When confronted with this fact, many will make an “appeal to science”. That is, they will claim they are after a deeper understanding of the biological brain and not a solution to practical AI. Really?

Modern silicon computing substrates have almost no similarity to brains and the modern technological environment does not resemble the environment brains evolved in. Our technological solutions to intelligence are not going to look like a biological brain. I am quite confident in this prediction. We must of course take inspiration from biology and Nature; after all, where else are we going to get it? But in the world of technology the only things that matter are what you can actually build and sell and how well it compares to other solutions. The solution to AI will emerge from these constraints.

Image by KimManleyOrt

Understand that Learning is Synonymous with Intelligence

It goes without saying that the field of machine learning is about learning. Learning, or perhaps more generally adaptation or plasticity, enables a program to adapt based on experience to attain better solutions over time. This is true across all domains of intelligence including perception (feature learning, classification, etc.), planning (combinatorial optimizers, classification, etc.) and control (motor actuation, reinforcement learning, etc.). The field of neuromorphic electronics has struggled to implement learning processors for a couple of reasons. First, the tools at our disposal are lacking. Transistors are very useful, but they are not intrinsically adaptive in the way synapses (and many other manifestations of life) are. Consequently, building learning synapses can be difficult and convoluted. Second, what form of adaptation does a hardware designer choose to implement? There appear to be dozens of types of plasticity in a neuron, so arbitrarily picking one does not seem like a good solution (although this is exactly what many do). While I believe Knowm Inc has answers to these challenges, the bigger issue is that an AI processor that cannot learn cannot be intelligent.

A simple thought experiment illustrates this fact. Take processor A, which can learn continuously, and processor B, which can be programmed into a state but cannot learn. Now make these processors compete in some way. Who wins? The only situation where processor B will be a consistent victor is in an environment that is not changing. If the game is simple and processors A and B are initialized into the Nash Equilibrium then it is likely that processor B will win. This is because processor A will undoubtedly make some mistakes as it searches for a better solution that does not exist, and will suffer a loss because of this. However, in the case where the game or environment is complex and always changing, which describes most of the real world, the learning processor is going to consistently beat the non-learning processor. As the saying goes, “victory goes to the one that makes the second-to-last mistake”. If you can’t learn from your (or your competitors’) mistakes you are doomed in the long run against a competitor that can. As Nick Bostrom said clearly in his book Superintelligence: Paths, Dangers, Strategies:

“It now seems clear that a capacity to learn would be an integral feature of the core design of a system intended to attain general intelligence, not something to be tacked on later as an extension or an afterthought”
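
The thought experiment above can be played out in a few lines of simulation. What follows is a toy sketch under my own assumptions, not a model of any real processor: a two-option task where one option pays 1 and the other pays 0, a fixed "processor B" that always plays the initially correct option, and a learning "processor A" implemented as a simple epsilon-greedy learner. The function name `run` and the parameters `epsilon`, `step_size` and `switch_every` are all illustrative choices.

```python
import random


def run(steps, switch_every=None, epsilon=0.1, step_size=0.5, seed=0):
    """Return (fixed_total, learner_total) reward on a two-option task.

    Option 0 pays 1 at the start and the other option pays 0. If
    switch_every is given, the paying option flips every that many steps.
    """
    rng = random.Random(seed)
    good = 0
    q = [1.0, 0.0]  # learner starts with the initially optimal estimates
    fixed_total = 0
    learner_total = 0
    for t in range(steps):
        if switch_every and t > 0 and t % switch_every == 0:
            good = 1 - good  # the environment changes

        # Processor B: programmed once, always plays option 0.
        fixed_total += 1 if good == 0 else 0

        # Processor A: explores occasionally, otherwise plays its best guess.
        if rng.random() < epsilon:
            choice = rng.randrange(2)
        else:
            choice = 0 if q[0] >= q[1] else 1
        reward = 1 if choice == good else 0
        learner_total += reward
        q[choice] += step_size * (reward - q[choice])  # learn from the outcome
    return fixed_total, learner_total


static_fixed, static_learner = run(2000)
changing_fixed, changing_learner = run(2000, switch_every=100)
print("static environment:  ", static_fixed, static_learner)
print("changing environment:", changing_fixed, changing_learner)
```

In the static case the fixed processor is never wrong, while the learner pays a small price for exploring. Once the environment starts flipping, the fixed processor is right only half the time and the learner recovers after each change.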

Don’t be Impressed with Complexity

It is extremely hard to make ideas simple, but it is the simple ideas that change the world. The best thinkers in the world emphasize over and over again the importance of simplicity:

“If you can’t explain something simply, you don’t know enough about it.” –Albert Einstein

“If you can’t explain something to a first year student, then you haven’t really understood it.” –Richard Feynman

“It always worked out that when I understood something, it turned out to be simple” –Carver Mead

However good this sounds, the unfortunate reality is that simple ideas are dangerous for researchers trying to find them. C.A.R. Hoare said of software design:

“There are two ways of constructing a software design: One way is to make it so simple that there are obviously no deficiencies and the other way is to make it so complicated that there are no obvious deficiencies. The first method is far more difficult.” –Tony Hoare

In other words, simple ideas are both hard to find and simple to verify. This leads to big problems when researchers are competing for funds. The person who presents a simple solution faces a discouraging scenario where it becomes easy for others (their competing researchers) to poke holes in the idea. Since it is very hard to find simple ideas, but simple ideas are easy to evaluate, it becomes statistically likely that a researcher presenting a simple idea will make at least one mistake, and that others will be able to spot it. The researcher proposing a complicated idea faces less criticism because nobody can really tell whether they are right or wrong. In an almost ironic twist, the researcher with the complex ideas sometimes likes to show off just how complex their solutions are, as if to say “I am so smart I can understand this complex thing”. The reality is that solutions evolve from complex to simple, and that complexity is a sign of a poor and immature solution.

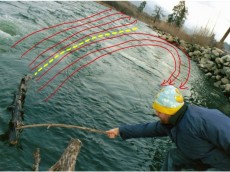

Recognize that Technological Solutions Evolve from Complex to Simple

It is hard to find the shortest path in the traveling salesman problem but easy to find longer, more convoluted paths. In the same way, solutions tend to arrive in a complex form at first and get simpler over time as more thought is put into them. As engineers (and especially programmers) get older they learn this lesson. Rather than jump into building the first thing they think of, they dwell on the idea and give it more brain-time. The longer you think about a problem, the more constraints you can consider and the better solutions get.
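
The traveling salesman analogy is easy to see on a small instance. The sketch below is an illustration under my own assumptions (eight random cities, a helper I have named `tour_length`, and exhaustive search as the "think longer" strategy): producing some valid tour takes one shuffle, while finding the shortest one here means checking every ordering.

```python
import itertools
import math
import random


def tour_length(points, order):
    """Total length of the closed tour that visits points in the given order."""
    n = len(order)
    return sum(
        math.dist(points[order[i]], points[order[(i + 1) % n]])
        for i in range(n)
    )


rng = random.Random(1)
points = [(rng.random(), rng.random()) for _ in range(8)]  # 8 random cities

# Easy: any ordering of the cities is a valid (usually convoluted) tour.
quick_order = list(range(len(points)))
rng.shuffle(quick_order)

# Hard: check all (n - 1)! orderings that start from city 0.
best_order = min(
    ((0,) + rest for rest in itertools.permutations(range(1, len(points)))),
    key=lambda order: tour_length(points, order),
)

print("tours examined:", math.factorial(len(points) - 1))
print("first tour found:", round(tour_length(points, quick_order), 3))
print("shortest tour:   ", round(tour_length(points, best_order), 3))
```

With only 8 cities the exhaustive search already examines 5040 tours, and the count grows factorially with every city added, which is why short, simple answers take so much more effort to find than long ones.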

Technological solutions require combining many pieces together in new ways. The possible combinations are immense, and finding ways to efficiently search the space of combinations is the heart of innovation. Quick-and-dirty solutions can be better than more thought-out solutions, but only under bounded time and resource constraints. Elegant, simple solutions tend to be ‘synergistic’, satisfying more constraints with fewer parts and costing less. When one finds a simple solution, the general feeling is stupidity: “how the heck did I not see this simple solution before!”. Others tend to reinforce this thought by saying “well if it is that simple somebody else must surely have thought of it”. Time after time, people forget that in the world of technology, ‘simple is hard’ and ‘complex is simple’.

Consider the Constraints of the Researcher

The field of AI is occupied by people and institutions with vastly different amounts of resources. Access to resources like computational power can give some groups distinct advantages. However, the major innovations in AI are not going to come from computers; they are going to come from the human mind. A person without access to a supercomputer (or the experience to program it effectively) may nonetheless have an idea that could work if they had the resources to demonstrate it. Since it is impossible to give every researcher all the money they want, we must find ways to quickly validate theories with minimal resources.

Image by Mukumbura

A benchmark result on a problem set that requires a multi-million dollar supercomputer is only accessible to a handful of wealthy participants, and they want it that way. Problems that can be solved with a single PC are easy to find and offer a quick reality check to compare against established methods. While it is not necessarily the case that if you can solve a small benchmark you can solve a large one, it is the case that if you can’t solve a small benchmark then you can’t solve a larger one. There is a ridiculous claim I see again and again, usually from those with disproportionately large computing resources: “Perhaps if we simulate at scale something emergent will happen”. This is ridiculous, and I offer my translation: “Perhaps if you could give us a lot of money then we can play with our expensive toys and avoid having to think”.

Consider Physics

The problem of intelligence is intimately linked to learning, and learning is an intensive memory-processing operation. There is good reason machine learning is currently experiencing a renaissance, and it does not have much to do with better algorithms. It has to do with more computational power in the form of GPUs. The faster a researcher can get a result, the faster they can learn from their mistakes and the better the algorithms get. This raises the question:

Can an arbitrary mathematical algorithm be considered a solution to AI if one cannot also show a path to efficient physical implementation?

This question can be seen as a sort of ‘appeal to realism’, since the goal is not the algorithm but rather the effect of the algorithm.

A learning algorithm is unavoidably linked to the physics of computation due to the separation of memory and processing. Anyone who ignores this fact substantially increases their chances of failure, because their solution is missing a big piece of the puzzle. A solution to AI will be simple, robust and inexpensive to purchase and operate. When one considers the adaptive power problem and its implications for large-scale learning systems, it becomes clear that some strategies for AI are mostly shots in the dark, pursued blissfully unaware that the path has a high chance of failure in the mid to long term. You can’t beat physics, so you may as well let it guide you!

Don’t Fall for the PR

“And it’s ironic too because what we tend to do is act on what they say and then it is that way.” –Jem

There is a force much more powerful than science or religion. It is called business. Most companies are under tremendous pressure to keep the money rolling in, maintain a positive public perception and keep their stock price high. Technology companies and universities are in an ever-growing competition to get the attention of funding agencies. Scientific or technological breakthroughs seem to blindside us every day, shallow on details but high on promise. This is the business of innovation.

The more money or influence a company has, the more it promotes its innovations and distracts from its failures. It drowns out those with smaller budgets (or no budgets). Money translates into advertising, and one does not have to advertise one’s failures. The result is that we constantly hear about all the amazing innovations of large tech companies but we rarely hear about the inadequacies, problems, or outright failures. We almost never hear about the amazing ideas and accomplishments of the little guys. While most companies do not outright lie, they all withhold and distort information. In many cases, it is not even intentional. When ideas move from the research department to the marketing department, technical caveats are inevitably lost. When it comes to impressing the individuals who make funding decisions, having your technology featured on the front page of CNN has a dramatic effect on how positively you are perceived. Given two research groups, one that made it to the front page of a big news network and another one that did not, who will be seen as more capable in the eyes of the funding agencies? If you were a news network and you had to choose between two press releases, one from a small no-name company and another from a company that purchases $5 million in ads per year, which one would you cover? The situation compounds itself as journals become involved. Knowing that the work of a corporation will be backed by substantial marketing, and hence result in more paid downloads of their papers, it is simply good business to accept the work of large corporations over Joe Average. As a business decision, it would be illogical to do otherwise. In the world of technology the jury can be (and routinely is) bought. Technology companies use marketing to claim territory, scare off the competition, and impress government funding agencies. Don’t fall for it. Ask yourself: what are they not saying?

New ideas can be hard to understand, not because they are complicated but because they are new. To get around this problem we tend to value information from some sources higher than others. The same claim of “we have discovered a path to general artificial intelligence” will be received differently depending on whether it comes from Google or from Joe Average. The reality is that there are many, many more “Joe Averages” in the world, and the solution to AI is not going to come from a computer; it is going to come from a human mind. Think about that. Apple came from two guys in a garage. HP came from two guys in a garage. Google came from two guys in a garage, and their first storage computer was built from Lego bricks. Notice a pattern?
