Knowm Inc was founded to develop memristive machine learning hardware and promote memristor science.

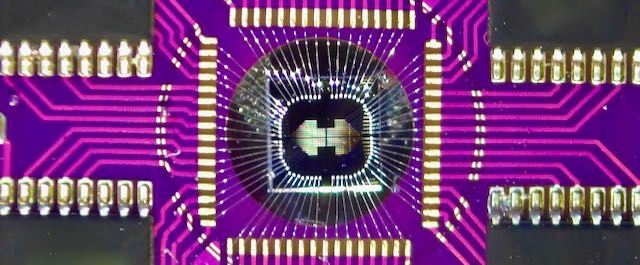

Knowm Memristors

The Knowm memristor material stack is based on mobile metal ion conduction through a chalcogenide material in which a metal-catalyzed chemical reaction has formed channels that constrain the flow of metal ions. The device resistance depends on the amount of metal located within the active channel layer. Dopants in the active layer are used to enhance and optimize properties such as switching speed, switching energy, endurance, data retention, and incremental sensitivity.

Knowm Memristors are available for sale and are shipping worldwide. As of September 2020, we've successfully produced memristor crossbars with resistance programming capabilities. The upcoming kT-RAM server will provide cloud access to dozens to hundreds of crossbars, allowing researchers to experiment with and test memristor-based synapses, neurons, and crossbars.
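The idea that resistance tracks the amount of metal in the active channel, and that it can be nudged in small increments, can be illustrated with a deliberately simple toy model. This is a minimal sketch, not a model of any real Knowm device: it assumes conductance varies linearly between two hypothetical bounds (`g_off`, `g_on`) with a metal fill fraction `x`, and that each programming pulse changes `x` by a fixed step. Real memristors are nonlinear and history-dependent.

```python
from dataclasses import dataclass


@dataclass
class ToyMemristor:
    """Hypothetical toy model (not a real device model): conductance is
    interpolated linearly between g_off and g_on according to the fraction
    x of the active channel that is filled with metal."""

    g_off: float = 1e-6  # siemens, assumed conductance with an empty channel
    g_on: float = 1e-3   # siemens, assumed conductance with a full channel
    x: float = 0.0       # metal fill fraction, kept within [0, 1]

    def conductance(self) -> float:
        # Linear interpolation is an illustrative assumption only.
        return self.g_off + self.x * (self.g_on - self.g_off)

    def resistance(self) -> float:
        return 1.0 / self.conductance()

    def pulse(self, dx: float) -> None:
        """Apply one programming pulse: positive dx drives metal into the
        channel (lower resistance), negative dx drives it out. The fill
        fraction is clamped to [0, 1]."""
        self.x = min(1.0, max(0.0, self.x + dx))


if __name__ == "__main__":
    m = ToyMemristor()
    for _ in range(5):
        m.pulse(0.1)  # small incremental steps, one per pulse
        print(f"x={m.x:.2f}  R={m.resistance():.1f} ohm")
```

Clamping the fill fraction mirrors the physical limit that a channel can be neither less than empty nor more than full; the step size `dx` stands in for the "incremental sensitivity" the text mentions.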

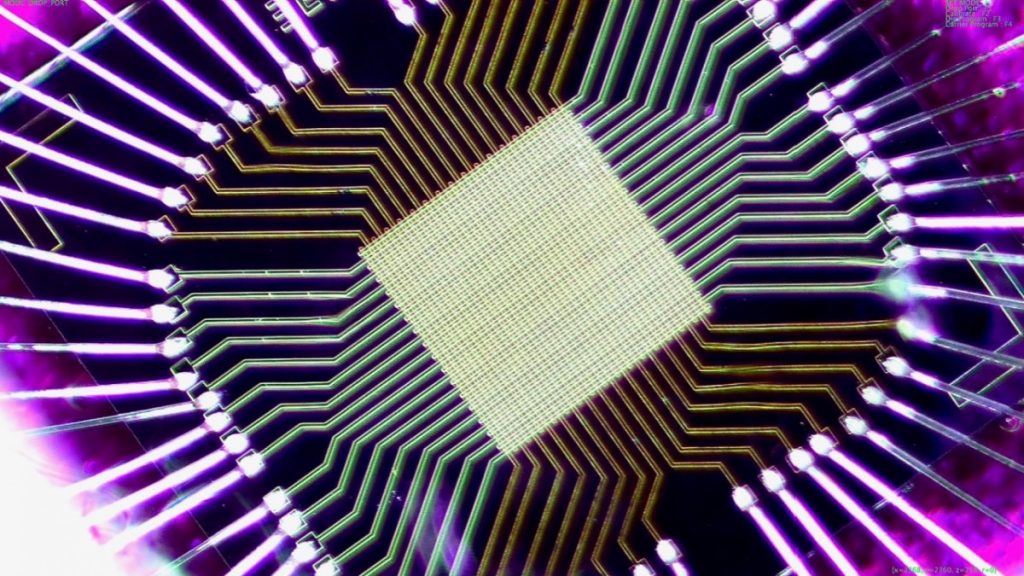

Knowm Technology Stack

The kT-RAM Technology Stack is a specification for differential-pair memristor synaptic processors that spans every level from the memristors themselves up to distributed machine learning applications. The stack allows separate groups to specialize at one or more levels where their strengths and interests align. Improvements at any level can propagate throughout the whole technology ecosystem, from materials to markets, without any single participant having to bridge the whole stack.
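A differential-pair synapse pairs two memristors so that a signed weight can be stored with devices whose conductances are always positive. The sketch below assumes the common convention that the weight is the difference of the two conductances and that a neuron sums the read currents of its active inputs; the conductance bounds, read voltage, and the names `DifferentialSynapse` and `neuron_output` are hypothetical and are not the kT-RAM instruction set.

```python
from dataclasses import dataclass
from typing import List

# Assumed conductance bounds for each memristor, in siemens (hypothetical).
G_MIN, G_MAX = 1e-6, 1e-3


def _clamp(g: float) -> float:
    """Keep a conductance within the assumed physical bounds."""
    return min(G_MAX, max(G_MIN, g))


@dataclass
class DifferentialSynapse:
    """Hypothetical differential-pair synapse: weight = g_a - g_b, so the
    weight can be positive or negative although both conductances are > 0."""

    g_a: float = G_MIN
    g_b: float = G_MIN

    def weight(self) -> float:
        return self.g_a - self.g_b

    def potentiate(self, dg: float) -> None:
        # Increase the "positive" device to raise the weight.
        self.g_a = _clamp(self.g_a + dg)

    def depress(self, dg: float) -> None:
        # Increase the "negative" device to lower the weight.
        self.g_b = _clamp(self.g_b + dg)


def neuron_output(synapses: List[DifferentialSynapse], active: List[int],
                  v_read: float = 0.1) -> float:
    """Sum the differential read currents of the active inputs.
    By Ohm's law each active synapse contributes v_read * (g_a - g_b)."""
    return sum(v_read * synapses[i].weight() for i in active)


if __name__ == "__main__":
    syn = [DifferentialSynapse() for _ in range(3)]
    syn[0].potentiate(5e-4)
    syn[2].depress(2e-4)
    print(neuron_output(syn, [0, 2]))
```

Representing the weight as a difference also means common-mode drift that shifts both devices equally cancels out of the weight, which is one reason differential pairs are a popular choice for memristive synapses.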